Recently, backup jobs that take quiesced snapshots kept failing in Veeam for some customers with the error "msg.snapshot.error-quiescingerror".

After exhausting several research options, I called VMware Support. We sifted through the event logs on the server and checked the VSS writers and how VMware Tools was installed.

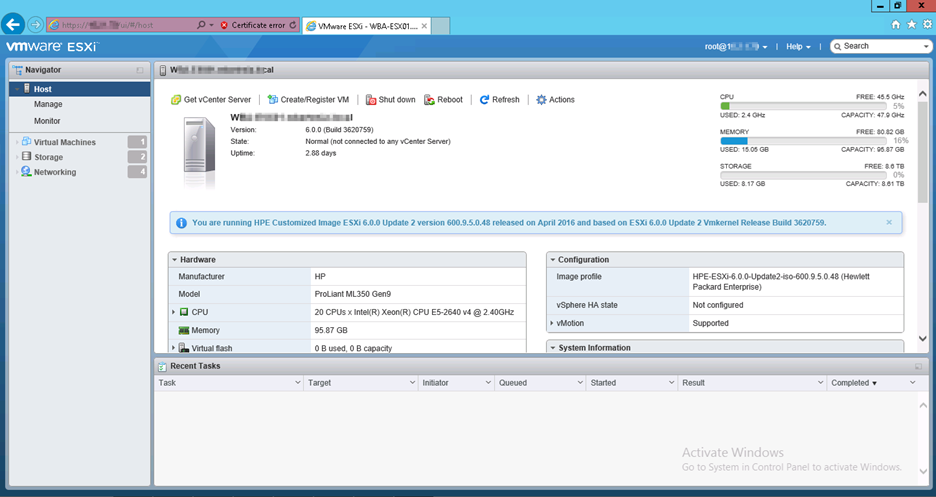

The log files on the ESX host running the server led to this article:

https://kb.vmware.com/s/article/2039900

A folder named backupScripts.d gets created and references the path C:\Scripts\PrepostSnap\ which is empty, so the job fails. The fix is below:

- Log in to the Windows virtual machine that is experiencing the issue.

- Navigate to C:\Program Files\VMware\VMware Tools.

- Rename the backupScripts.d folder to backupScripts.d.old.
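
If the same fix has to be applied on several machines, the rename step above could be scripted. The following is only a minimal sketch in Python, assuming Python is available on the virtual machine and that VMware Tools is installed in the default path from the steps above; the function name `disable_backup_scripts` is made up for illustration and is not part of any VMware tooling.

```python
from pathlib import Path

# Default VMware Tools install directory, taken from the steps above.
# Assumption: adjust this if VMware Tools was installed elsewhere.
TOOLS_DIR = Path(r"C:\Program Files\VMware\VMware Tools")


def disable_backup_scripts(tools_dir: Path = TOOLS_DIR) -> bool:
    """Rename backupScripts.d to backupScripts.d.old.

    Returns True if the folder was found and renamed, False if the
    install directory or the folder is not present.
    """
    if not tools_dir.is_dir():
        return False

    for entry in tools_dir.iterdir():
        # Compare case-insensitively, since the folder name shows up
        # with different capitalisation (backupScripts.d / backupscripts.d).
        if entry.is_dir() and entry.name.lower() == "backupscripts.d":
            target = entry.with_name(entry.name + ".old")
            if target.exists():
                # Do not overwrite an earlier backup of the folder.
                raise FileExistsError(f"{target} already exists")
            entry.rename(target)
            return True

    return False
```

Calling `disable_backup_scripts()` with no arguments targets the default path. Writing under C:\Program Files normally requires an elevated (administrator) session. A return value of `False` corresponds to the "folder is not present" case.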

If that folder is not present, or if the job still fails, the VMware services should be checked.