Microsoft has been emphasizing Office 365 (now Microsoft 365) subscription services since their public introduction in 2011. These services had grown to over 155 million active users as of October 2018 and were gaining more than 3 million seats per month. With this growth, ongoing marketing, and the increasing acceptance of public cloud services, many businesses and financial institutions are starting to look at Microsoft 365.

In this article, we will highlight several pros and cons of Microsoft 365 you should consider to determine if it's right for your business.

Microsoft 365 (formerly Office 365) encompasses several different products and services, but in this article, we will address these services in two primary areas: user applications and back-end services.

Microsoft 365 User Applications

Most Microsoft 365 subscription plans include Office applications like Word and Excel for Windows, macOS, and mobile devices running iOS and Android. The applications are also available through a web browser, but most customers are interested in Microsoft 365 applications as a possible replacement for traditional Office licensing.

What are the primary differences between Microsoft 365 and traditional on-premise Office applications?

- Microsoft 365 is an annual subscription per user or seat. Each user is entitled to run the Microsoft 365 applications on up to 5 devices for the term of the subscription. As long as you continue to pay the annual subscription, you are covered for the Office applications included in your plan.

- Office applications through Microsoft 365 are designed to be downloaded from the M365 (formerly O365) portal. There is no license key to determine if you have a valid license. After installation, the applications routinely "check in" to the M365 portal to ensure there is an active account. Because of this check-in process, IT administrators must use a specific procedure for mass deployment of M365 applications. Additionally, installation on multi-user servers like Remote Desktop Services and Citrix requires a new approach.

- Microsoft 365 applications are designed to install feature and security updates directly from Microsoft when they are released. Legacy patch management solutions like Windows Server Update Services (WSUS) and third-party solutions will not work with M365. This can create a challenge for regulated customers who are required to report on patch status. Scanning tools used by auditors to determine patch levels will need to recognize the differences between M365 and traditional Office applications. The M365 update process could also create an issue for Office-integrated applications: if a hotfix is released that affects the compatibility of those applications, there will be no option to block that update from being installed.

- Microsoft 365 applications use a feature called Click-to-Run. This feature, first introduced with Office 2010, provides a streaming method for installing features and patches for Microsoft 365 and Office 2019 applications. In our experience, Click-to-Run can use a significant amount of bandwidth if you are installing Office applications or large updates on multiple systems simultaneously.
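
To put the bandwidth concern in the last point into rough numbers, here is a minimal Python sketch. It assumes every PC downloads its own copy over one shared Internet link that is otherwise idle. The install size, PC count, and link speed are hypothetical values chosen for illustration, not Microsoft figures.

```python
def transfer_minutes(payload_gb: float, machines: int, link_mbps: float) -> float:
    """Minutes to deliver `payload_gb` to each of `machines` over a shared link.

    Assumes each machine pulls its own copy from the Internet and the link
    carries no other traffic, so real-world times will be longer.
    """
    total_megabits = payload_gb * 1000 * 8 * machines  # GB -> megabits (decimal units)
    return total_megabits / link_mbps / 60


# Hypothetical inputs: a 3 GB install on 25 PCs over a 100 Mbps connection.
print(round(transfer_minutes(3, 25, 100)))  # 100
```

Under these assumptions the link is saturated for well over an hour, which is why staggering installations or scheduling them outside business hours is worth considering.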

Is licensing through Microsoft 365 less expensive than traditional licensing?

For most customers the biggest question is: "Is licensing through Microsoft 365 less expensive than traditional licensing?" The answer is "It depends!" Microsoft 365 licensing could be financially attractive if:

- Your business always updates to the latest release of Office.

- You want the flexibility of per user licensing.

- You want to take advantage of the licensing of up to 5 devices for multiple systems, mobile devices, home use, etc.

- You need a simplified update process that works anywhere the PC has Internet connectivity.

- You need to use the browser-based applications for a specific function or employee role.

- You plan to implement one of the Microsoft 365 back-end services.
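
The "it depends" answer can be made concrete with a simple break-even comparison. The Python sketch below looks only at license fees per user over one upgrade cycle. The prices are hypothetical placeholders, not Microsoft list prices, and the comparison ignores the other factors listed above, such as multi-device rights and back-end services.

```python
def break_even_years(perpetual_price: float, subscription_per_year: float) -> float:
    """Years of subscription fees that equal one perpetual license purchase."""
    return perpetual_price / subscription_per_year


def cheaper_option(perpetual_price: float, subscription_per_year: float,
                   years_between_upgrades: float) -> str:
    """Compare cost per user over one upgrade cycle (license fees only)."""
    subscription_total = subscription_per_year * years_between_upgrades
    if subscription_total < perpetual_price:
        return "subscription"
    if subscription_total > perpetual_price:
        return "perpetual"
    return "equal"


# Hypothetical prices for illustration only.
PERPETUAL = 400.0      # one-time cost per user
SUBSCRIPTION = 100.0   # annual cost per user

print(break_even_years(PERPETUAL, SUBSCRIPTION))   # 4.0
print(cheaper_option(PERPETUAL, SUBSCRIPTION, 3))  # subscription
print(cheaper_option(PERPETUAL, SUBSCRIPTION, 6))  # perpetual
```

With these example numbers, a business that upgrades Office every three years pays less with the subscription, while one that keeps a release for six years pays less with a perpetual license.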

Microsoft 365 Back-End Services

Microsoft provides several cloud server applications through Microsoft 365, including Exchange Online (email), Skype for Business (voice and messaging collaboration), SharePoint (file collaboration), and OneDrive (file storage and sharing). These back-end services can be implemented individually or as part of a bundle, with or without the Office applications, depending on the plan. Of these, the choice between Exchange Online and on-premise Exchange is receiving the most attention from our customers.

What should I look for when performing due diligence?

The security and compliance requirements for back-end Microsoft 365 services are not significantly different from those for any other cloud-based application or service. The areas to research include:

- External audit attestation – SSAE 18 or similar

- Data location and residency – production and failover scenarios

- Data privacy policies – including encryption in transit and at rest

- Contracts and licensing agreements

- Intellectual property rights

- Service Level Agreements – service availability, capacity monitoring, response time, and monetary remediation

- Disaster recovery and data backup

- Termination of service

- Technical support – support hours, support ticket process, response time, location of support personnel
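
When reviewing the service availability figure in a Service Level Agreement, it can help to convert the percentage into the downtime it actually permits. The Python sketch below does that arithmetic for a 30-day period. The availability levels shown are generic examples, not the terms of any specific Microsoft agreement.

```python
def allowed_downtime_minutes(availability_pct: float, days: int = 30) -> float:
    """Maximum downtime in minutes over `days` that still meets the SLA."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - availability_pct / 100)


# Example availability levels, chosen for illustration.
for pct in (99.0, 99.9, 99.99):
    print(f"{pct}% -> {allowed_downtime_minutes(pct):.1f} minutes per 30 days")
```

For example, 99.9% availability still permits roughly 43 minutes of downtime in a 30-day month, so the percentage alone should be read alongside the response time and monetary remediation terms.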

A few more things to consider...

As a public cloud service, Microsoft 365 has several challenges that need specific attention:

- The business plans listed on the primary pricing pages may include applications or services that you don't need. The range of features can be confusing, and it's easy to pick a plan that seems close enough without realizing exactly what's included, and then pay for services you will never use.

- Most of the back-end M365 services can integrate with an on-premise Active Directory environment to simplify the management of user accounts and passwords. This provides a "single sign-on" experience for the user, with one username and password for both local and M365 logins. Microsoft has several options for this integration, but each option has significant security implications that should be reviewed very carefully.

- Microsoft has published several technical architecture documents on how to have the best experience with Microsoft 365. The recommendations are especially important for larger deployments of 100+ employees, or customers with multiple physical locations. One of the notable recommendations is to have an Internet connection at each location with a next-generation firewall (NGFW) that can optimize Internet traffic for M365 applications. Redundant Internet connections are also strongly recommended to ensure consistent connectivity.

- The default capabilities for email filtering, encryption, and compliance journaling in Exchange Online may not provide the same level of functionality as other add-on products you may be currently using. Many vendors now provide M365-integrated versions of these solutions, but there will be additional costs that should be included in the total.

- Microsoft OneDrive is enabled by default on most Microsoft 365 plans. Similar to other public file sharing solutions like Dropbox, Box, and Google Drive, the use of OneDrive should be evaluated very carefully to ensure that customer confidential data is not at risk.

- Several other vendors offer Microsoft 365 add-on products with additional functionality that may be useful for some businesses. Netwrix Auditor for Microsoft 365 can provide logging and reporting for security events in your M365 environment. Veeam Backup for Microsoft 365 can create an independent backup of your data to ensure it will always be available. Cloud Access Security Brokers (CASB) such as Fortinet FortiCASB and Cisco Cloudlock can provide an additional layer of security between your users and cloud services such as M365.

It is certainly a challenge to research and evaluate cloud solutions like Microsoft 365. Financial institutions and other regulated businesses with high-security requirements have to take a thorough look at the pros and cons of any cloud solution to determine if it's the best fit for them.

CoNetrix Aspire has been providing private cloud solutions for businesses and financial institutions since 2007. Many of the potential security and compliance issues with the public cloud are more easily addressed in a private cloud environment when the solution can be customized for each business.

The combination of Office application licensing with back-end services like Exchange Online can be a good solution for some businesses. The key is to understand all of the issues related to Microsoft 365 so you can make an informed decision.

Contact CoNetrix Technology at [email protected] if you want more information about the differences between Aspire private cloud hosting and Microsoft 365.

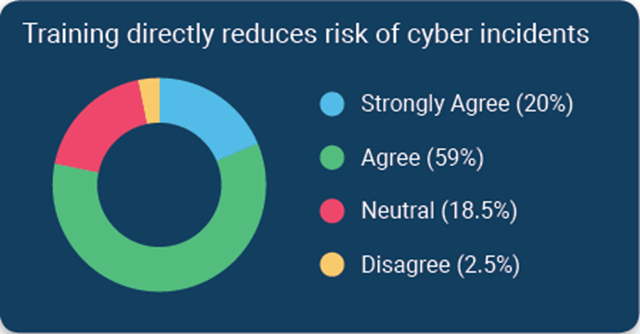

According to a survey of financial institutions conducted by Tandem, a CoNetrix Security partner, 79% of respondents stated they believe cybersecurity awareness training directly reduces the risk of security incidents. Download the 2020 State of Cybersecurity Report for additional trends and insights.
